The worst defects are the ones that become incidents in production. You might hear about the cost of defects in various stages of the SDLC, but I’ve seen incidents that cost over $1M an hour in production downtime. Some QE teams might avoid looking at these because the fire is too hot. If you are brave, then read on!

One of the best ways to learn about Operations and testing gaps is to be personally accountable.

As both a QA leader and a support leader, I had the opportunity to see production issues as specific items that my team had missed. Here is the approach I took to improving our quality engineering; it might help you out too.

First, I read a bunch of articles and best practices on Root Cause Analysis. I also have my own personal guru on all things software project related. My wife is a PMP, PMI, ABC, and DEF. She doesn’t blog, and I’m not volunteering her consulting services, but if you ever come over for dinner sometime she is glad to talk shop over a plate of BBQ.

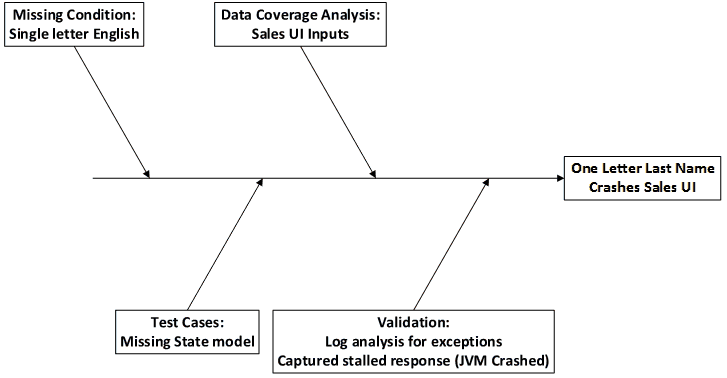

From that reading, I decided that I really liked the 5 Whys and the Ishikawa fishbone diagram. So, using those two techniques, I moved on.

Second, I created a subset of incidents related to the projects for which my team owned quality engineering. This is not to say that we could help everywhere, but it was more logical to do a root cause analysis on our own subset. Then, as a slight variation on the 5 Whys, I asked the team to determine where each incident fell in this grouping: missing test case << test plan << test strategy. In other words, was the gap a single missing test case, a hole in the test plan, or a hole in the test strategy itself?

So from that grouping concept we came up with the overarching areas for test strategy opportunities:

- Configuration

- Deployment

- Data

- Code

- Performance

Note that this was a difficult discussion. The team had a deep focus on functional testing and performance testing. The errors in configuration and deployment had previously been out of scope. In my more recent experiences as a QA consultant/architect I have rarely seen a test organization that looks at these two areas. So, our analysis and categorization of this as an issue was very innovative at the time.
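To make the grouping concrete, here is a minimal sketch of how incidents could be tagged with one of the five areas and one of the three gap levels, then tallied to surface the largest gaps. The class names, function names, and sample incident IDs are all hypothetical and invented for illustration; this is not the tooling my team used, just one way the bookkeeping might look.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class GapLevel(Enum):
    """The three nested levels from the grouping: case << plan << strategy."""
    TEST_CASE = "missing test case"
    TEST_PLAN = "test plan"
    TEST_STRATEGY = "test strategy"


class Category(Enum):
    """The five overarching areas listed above."""
    CONFIGURATION = "Configuration"
    DEPLOYMENT = "Deployment"
    DATA = "Data"
    CODE = "Code"
    PERFORMANCE = "Performance"


@dataclass(frozen=True)
class Incident:
    incident_id: str  # hypothetical identifier, e.g. a ticket number
    category: Category
    gap_level: GapLevel


def tally(incidents):
    """Count incidents per (category, gap level) pair."""
    return Counter((i.category, i.gap_level) for i in incidents)


def largest_gaps(incidents, top=3):
    """Return the most frequent (category, gap level) pairs with their counts."""
    return tally(incidents).most_common(top)


# Made-up sample data, for illustration only.
SAMPLE_INCIDENTS = [
    Incident("INC-1", Category.CONFIGURATION, GapLevel.TEST_STRATEGY),
    Incident("INC-2", Category.CONFIGURATION, GapLevel.TEST_STRATEGY),
    Incident("INC-3", Category.DEPLOYMENT, GapLevel.TEST_STRATEGY),
    Incident("INC-4", Category.CODE, GapLevel.TEST_CASE),
]

if __name__ == "__main__":
    for (category, gap_level), count in largest_gaps(SAMPLE_INCIDENTS):
        print(f"{category.value} / {gap_level.value}: {count}")
```

A simple count like this ignores business impact. In practice you would probably want to weight each incident by its cost or severity before ranking the gaps.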

Third, for the highest priority incidents we dove deeper into each one to determine what was actually missing. Once again, these faults had significant business impact. In prior test responsibility discussions, the team had been primarily focused upon the requirements. I have seen this fallback position recur at many other QA organizations too.

Consider another point of view: if you were to ask the business owners, or even the customers, they would say that QA owns anything related to quality. For example, when somebody misses a flight due to an airline computer glitch, do they think that it was a missing requirement or poor quality? I am always disappointed when I hear a QA leader pass the blame without taking responsibility. We should be perfectionists, and continue to strive for ways to create the best solution possible.

We need to optimize our resources to the maximum effect. Thus, if we can find the largest strategic gaps, then we can improve the solution in the most effective manner. Perhaps we can find a strategic hole that requires a more clearly defined requirement, or perhaps, as shown in the example below, the requirements are implicit.

So, in this example, imagine a major fault where entering a one-character last name into a user interface crashes the host JVM. This is a list of the Whys, correlated into an Ishikawa fishbone diagram and the categories we created for our data: